AI Wellness Coach: Where It Helps Without Replacing Human Care

- Mimic Minds

- 1 day ago

Can an AI wellness coach actually support your health goals without pretending to be your therapist, doctor, or personal trainer?

That question matters because wellness sits in a tricky middle zone. It touches sleep, stress, nutrition, movement, motivation, and habits. These are everyday needs, but they can also connect to medical conditions, mental health crises, and complex life situations. A good digital coach can offer structure and consistency. A responsible one knows when to step back, point you toward a clinician, and stop short of diagnosis.

In this guide, we’ll break down what an AI wellness coach does well, where it fails, and how to design it ethically so it complements human care instead of replacing it. We’ll also ground this in real production thinking, because in practice, a wellness assistant is not “just a chatbot.” It’s a system with conversation design, guardrails, a persona, and a delivery layer that has to feel safe and human first.

What an AI Wellness Coach Really Is

An AI wellness coach is best understood as a daily companion for habit change and self regulation, not a clinician. It can:

Offer structured check ins and journaling prompts

Help set goals and break them into realistic routines

Provide habit reminders and gentle accountability

Suggest general wellness education such as sleep hygiene or stress reduction techniques

Track progress signals such as mood ratings, energy levels, step goals, hydration, and sleep schedules

What it should not do:

Diagnose conditions

Replace therapy, psychiatry, or medical care

Recommend medication changes or treat symptoms

Handle crisis situations without immediate escalation pathways

The strongest wellness experiences usually add a face and voice because humans respond to presence. That is where embodied interfaces and conversational avatars become more than aesthetics. A well designed digital human can deliver guidance with warmth, pacing, and emotional intelligence, while still staying within strict safety boundaries. That approach aligns closely with how Mimic Minds frames human first AI communication: empathetic, emotionally aware, and technically credible, without drifting into hype.

If you’re exploring a digital character interface for this exact use case, the Mimic Minds solution built specifically for health aligned experiences is the AI avatar for wellness, designed to deliver guided conversations that feel supportive while remaining consent aware and professional.

Where It Helps Most

The value of an AI wellness coach shows up in repetition. Human professionals are better at depth. Machines are better at consistency. When you combine those strengths correctly, you get support that feels present between appointments.

Here are the highest impact areas.

Habit formation and routines: Daily routines are where most wellness goals succeed or fail. A digital coach can help users set a bedtime wind down ritual, build a walking plan, or create a simple meal rhythm, then keep it alive through check ins.

Sleep and recovery scaffolding: Not medical sleep treatment, but the fundamentals: consistent schedules, light exposure awareness, caffeine timing, screen boundaries, and relaxation cues.

Stress regulation and micro interventions: Short breathing sessions, grounding exercises, reframing prompts, and reflective journaling can be delivered in a low friction way. The key is positioning: supportive guidance, not therapy.

Motivation and accountability without shame: A good coaching persona avoids guilt loops. It mirrors progress, celebrates small wins, and normalizes setbacks, then guides users back to the plan.

Education in plain language: Users often know what they “should do” but not why it works. A wellness companion can explain the logic behind hydration, protein distribution, recovery days, or nervous system basics in a calm voice.

To scale this responsibly, the assistant needs more than text. It needs a consistent identity, controlled messaging, and safe conversational boundaries. That’s why many teams implement wellness coaching as a branded interactive agent rather than a generic chat tool, using platforms like Mimic AI Studio to shape the persona, scripts, escalation rules, and deployment experience.

Where Human Care Must Stay in the Loop

The line is not philosophical. It’s operational. If your product crosses into diagnosis, crisis counseling, or clinical decision making, humans must lead.

An AI wellness coach should defer to human care in these scenarios.

Mental health crisis signals: Mentions of self harm, suicidal thoughts, panic episodes, psychosis, or immediate danger require crisis escalation, local resources, and a stop to coaching behaviors.

Eating disorder risk: Coaching around weight, calorie restriction, or body image can become harmful fast. This category needs specialized clinical oversight.

Medical symptoms and treatment questions: Chest pain, fainting, persistent insomnia, medication interactions, pregnancy complications, and similar topics are not coaching territory.

Chronic disease management decisions: Lifestyle can support chronic conditions, but care plans should be clinician guided, especially when users ask what to change in treatment.

Complex trauma and grief processing: A system can offer grounding prompts, but anything that resembles therapy should include referral guidance and opt in boundaries.

In well built systems, the handoff is not a dead end. It’s a designed transition: “This sounds important and deserves a real clinician. Here’s how to reach your care team, and here are immediate steps you can take right now that are safe.” That is human first AI in practice.
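As a rough illustration of what "operational" can mean here, the sketch below routes each incoming message to coaching, a clinical referral, or a crisis flow before any coaching reply is generated. Everything in it is an assumption for illustration: the `Route` names, the `route_message` function, and especially the trigger phrases, which are placeholders rather than a vetted list. A production system would rely on clinician reviewed triggers and more robust detection than substring matching.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    COACHING = "coaching"
    CLINICAL_REFERRAL = "clinical_referral"
    CRISIS = "crisis"


# Hypothetical trigger phrases, for illustration only. A real list would be
# written and reviewed by clinicians, not copied from a blog post.
CRISIS_TRIGGERS = ("suicidal", "kill myself", "self harm", "hurt myself")
CLINICAL_TRIGGERS = ("chest pain", "fainting", "medication", "dosage")


@dataclass
class Decision:
    route: Route
    reply: str


def route_message(text: str) -> Decision:
    """Pick a route for one user message using simple phrase matching."""
    # Normalize case and hyphens so "Self-harm" matches "self harm".
    lowered = text.lower().replace("-", " ")

    # Crisis is checked first so it always wins over lower severity routes.
    if any(trigger in lowered for trigger in CRISIS_TRIGGERS):
        return Decision(
            Route.CRISIS,
            "This sounds serious and you deserve immediate human support. "
            "Please contact your local emergency or crisis service now.",
        )

    if any(trigger in lowered for trigger in CLINICAL_TRIGGERS):
        return Decision(
            Route.CLINICAL_REFERRAL,
            "This sounds important and deserves a real clinician. "
            "Here is how to reach your care team.",
        )

    return Decision(Route.COACHING, "Happy to help with that routine.")


if __name__ == "__main__":
    for message in (
        "Help me build a bedtime routine",
        "I get chest pain when I run",
        "I keep thinking about self-harm",
    ):
        print(message, "->", route_message(message).route.value)
```

The ordering is the design choice worth noting: the most severe route is evaluated first, and the coaching path is only the fallback, so a message that mentions both medication and self harm still lands in the crisis flow.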

If you’re mapping wellness into healthcare settings, the most relevant deployment model is typically a supportive front door experience tied to broader care programs. For that pathway, explore the AI avatar for healthcare as a reference point for how conversational interfaces can support education, onboarding, and patient guidance while keeping clinicians in control.

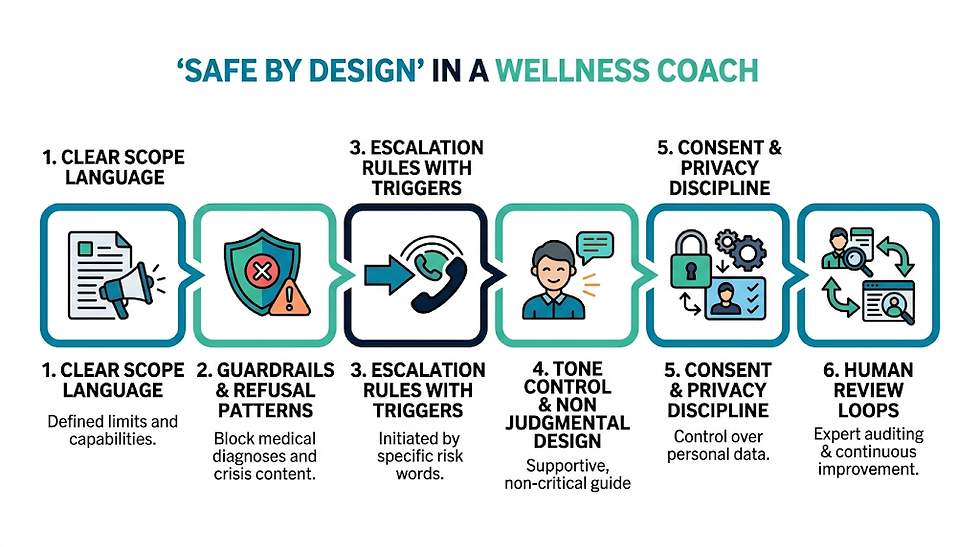

What “Safe by Design” Looks Like in a Wellness Coach

A trustworthy AI wellness coach is built like a production pipeline. You do not simply launch a model and hope it behaves. You shape the system the same way you would shape a film performance: direction, constraints, review, and iteration.

Core design elements that matter most:

Clear scope language inside the experience: Users should know what the coach can and cannot do, in simple human terms.

Guardrails and refusal patterns: The assistant must decline medical diagnosis requests, medication advice, and crisis content, then redirect safely.

Escalation rules with triggers: If users mention certain risk words or patterns, the system shifts into a safety flow with emergency support guidance.

Tone control and non judgmental conversation design: Avoid guilt, fear, or pseudo clinical authority. The assistant should sound like a calm guide, not a doctor.

Consent and privacy discipline: Wellness data is intimate. Users need control over what is stored, what is shared, and what is used for personalization.

Human review loops: A wellness system improves when experts audit conversations and refine prompts, scripts, and limitations.
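The consent and privacy item above can be made concrete with a small data model. The sketch below is a minimal example under stated assumptions: `ConsentSettings`, `UserRecord`, and their field names are hypothetical, storage is an in-memory list, and both consent flags default to off so nothing is kept or used until the user opts in.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentSettings:
    # Hypothetical flags; both default to False so storage is opt in.
    store_history: bool = False
    personalize: bool = False


@dataclass
class UserRecord:
    consent: ConsentSettings = field(default_factory=ConsentSettings)
    entries: list = field(default_factory=list)

    def log(self, kind: str, value: int) -> bool:
        """Store a check in entry only if the user allowed history storage."""
        if not self.consent.store_history:
            return False
        self.entries.append({"kind": kind, "value": value})
        return True

    def personalization_view(self) -> list:
        """Return entries for personalization only with explicit consent."""
        return list(self.entries) if self.consent.personalize else []

    def export(self) -> list:
        """Give the user a copy of everything stored about them."""
        return [dict(entry) for entry in self.entries]

    def delete_all(self) -> int:
        """Erase all stored entries and report how many were removed."""
        removed = len(self.entries)
        self.entries.clear()
        return removed


if __name__ == "__main__":
    record = UserRecord()
    print(record.log("mood", 3))          # nothing stored without consent
    record.consent.store_history = True
    print(record.log("mood", 4))          # stored after opt in
    print(record.personalization_view())  # still empty until personalize is on
```

Separating "may store" from "may use for personalization" mirrors the three controls named above: what is stored, what is shared, and what is used.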

This is also where embodied interfaces help. With a real time conversational avatar, you can steer pacing, facial expression, and warmth to reduce user anxiety, while also visibly signaling that the experience is a guide, not a clinician. If you’re evaluating deployment options for such experiences, the Agents page is a practical starting point for understanding how branded digital assistants can be structured as controlled systems rather than open ended chat.

Comparison Table

| Approach | Best for | Strengths | Limits | Safety notes |
| --- | --- | --- | --- | --- |
| Rule based wellness prompts | Simple routines | Predictable, easy to audit | Feels generic, low personalization | Safer but limited empathy |
| Standard chatbot coach | Habit coaching and education | Flexible conversation, scalable | Can drift into medical advice | Needs strong guardrails |
| Avatar based AI wellness coach | Engagement and adherence | Presence, warmth, better retention | Requires production effort | Must avoid clinical role play |
| Hybrid with human coach oversight | Higher risk populations | Strong safety, tailored support | Higher cost, slower scale | Best for sensitive domains |
| Clinician led care with AI support | Medical adjacent care | Clinicians remain primary | Operational complexity | Highest accountability |

Applications Across Industries

Wellness coaching is not confined to consumer apps. The same interaction patterns show up anywhere people need ongoing support, education, and motivation.

Corporate wellbeing programs for stress, sleep, and burnout prevention

Insurance member engagement for lifestyle education and adherence support

Fitness platforms delivering structured routines and recovery guidance

Senior care support for mobility reminders, hydration, and daily check ins

Hospitality experiences that encourage healthier travel routines such as sleep and movement

Sports and performance programs supporting consistent training habits and recovery rituals

Rehabilitation adjacent programs where motivation and routine matter, while clinicians supervise

If you want to see how Mimic Minds frames deployment across verticals, the Industries overview is useful for mapping where a digital coach fits naturally, from employee wellness to health adjacent services.

Benefits

The best reason to use an AI wellness coach is not novelty. It’s continuity.

Key benefits when designed responsibly:

Consistent support between human appointments

Scalable habit coaching without clinician burnout

Personalization based on user preferences and patterns

Lower barrier to starting wellness routines for beginners

Reduced friction through guided micro steps rather than big lifestyle overhauls

A calmer user experience when delivered with an emotionally aware voice and persona

Future Outlook

Wellness is heading toward real time, multimodal coaching. Not in a sci fi sense, but in a practical one: voice, facial expression, simple biometric inputs, and more context aware conversations.

We’ll likely see:

Real time conversational digital humans that feel present while staying strictly non clinical

Better personalization through structured memory that users control

Tighter integration into care pathways where AI handles education and routine, while humans handle diagnosis and treatment

More transparent safety layers that clearly show what an assistant is allowed to do, and why

Stronger consent frameworks and audits, especially as wellness products intersect with healthcare regulation

The teams that win will treat the assistant like a produced experience, not a model demo. That means careful persona direction, consistent dialogue design, and a delivery layer that can be deployed reliably across web, mobile, kiosks, and enterprise contexts. For larger organizations evaluating rollout and governance, the Enterprise page is the most relevant hub for understanding how these systems are typically structured at scale.

FAQs

Is an AI wellness coach the same as a therapist?

No. A wellness coach supports habits, routines, and general education. Therapy treats mental health conditions and should be led by licensed professionals.

Can an AI wellness coach give medical advice?

It should not. A responsible system can share general wellness information but must avoid diagnosis, medication guidance, and symptom interpretation.

What should I look for in a safe wellness coach app?

Clear scope, transparent limitations, crisis escalation guidance, privacy controls, and a tone that avoids clinical authority or shame based motivation.

Can an AI wellness coach help with anxiety?

It can support stress management techniques such as breathing or journaling, but ongoing anxiety or panic requires professional care, especially if symptoms are intense or persistent.

How does an avatar based coach improve results?

Presence increases adherence. People engage longer with a consistent face and voice, especially when the persona is calm and emotionally aware, as long as the product stays within safe boundaries.

Will AI replace human wellness coaches?

Not in the work that requires deep empathy, clinical judgment, or complex life context. AI can handle consistency and reminders, while humans provide depth, nuance, and accountability.

Is my wellness data private?

That depends on the product. Look for explicit consent controls, clear data retention policies, and options to delete or export your information.

Can a wellness coach be used inside healthcare programs?

Yes, when positioned as supportive education and routine coaching, with clinicians supervising medical decisions and escalation pathways.

Conclusion

An AI wellness coach is most powerful when it stays humble. It’s a steady companion for routines, reflection, and small daily choices. It becomes harmful when it tries to play doctor, therapist, or diagnostician.

The future belongs to wellness systems that are produced with care: a grounded persona, clear boundaries, and safe escalation paths, delivered through interfaces that feel human without claiming to be human. When you design that way, AI does what it’s best at (consistency, clarity, and availability), while human care remains exactly where it should be: in charge of health decisions that truly matter.

For further information or queries, please contact the Mimic Minds press department: info@mimicminds.com
