AI Avatar Keynote Speakers: Can a Digital Human Steal the Show at Your Conference?

- Mimic Minds

- 3 days ago

What if your next keynote speaker never misses a cue, speaks every language your audience needs, and can deliver the same story flawlessly in Mumbai, Dubai, and New York on the same day?

That is the real conversation behind AI avatar keynote speakers. Not a gimmick. Not a novelty act. A new kind of stage presence built from performance craft, real-time rendering, and conversational intelligence. When designed properly, a digital human can carry a conference moment with the same gravitas as a human presenter, while unlocking formats a traditional keynote cannot.

This article breaks down what it actually takes to put a believable AI-driven speaker on stage, where it shines, where it fails, and how to decide if a virtual keynote fits your event’s intent, budget, and audience expectations.

What an AI Avatar Keynote Speaker Really Is

An AI avatar keynote speaker is not just a face on a screen with a scripted voiceover. The credible versions are built like screen characters, then deployed like live systems.

At a practical level, a stage-ready digital human usually blends four layers:

Visual identity and character design: facial likeness, wardrobe, micro details that read well on LED walls

Performance layer: motion capture, keyframe animation, or live puppeteering for body language

Voice system: studio-quality text-to-speech, or a cloned voice with explicit consent and contractual control

Intelligence layer: scripted talk plus optional conversational AI for audience questions, booth interactions, or moderated panels

If your event needs a polished keynote that lands like cinema, the avatar behaves like a performer. If your event needs Q and A, it behaves like a hosted AI system with guardrails.

For teams evaluating a complete build and deployment workflow, the most direct overview is the platform-level view inside the Mimic AI Studio, because it frames the avatar as a controllable production asset rather than a one-off demo.

Why Conferences Are Exploring Digital Human Speakers

Conference programming is under pressure. Audiences want spectacle, clarity, and substance, without long windups or generic slides. Meanwhile, organizers are balancing cost, logistics, speaker availability, and global reach.

Organizers are considering well-built AI avatar keynotes because they can solve specific event problems:

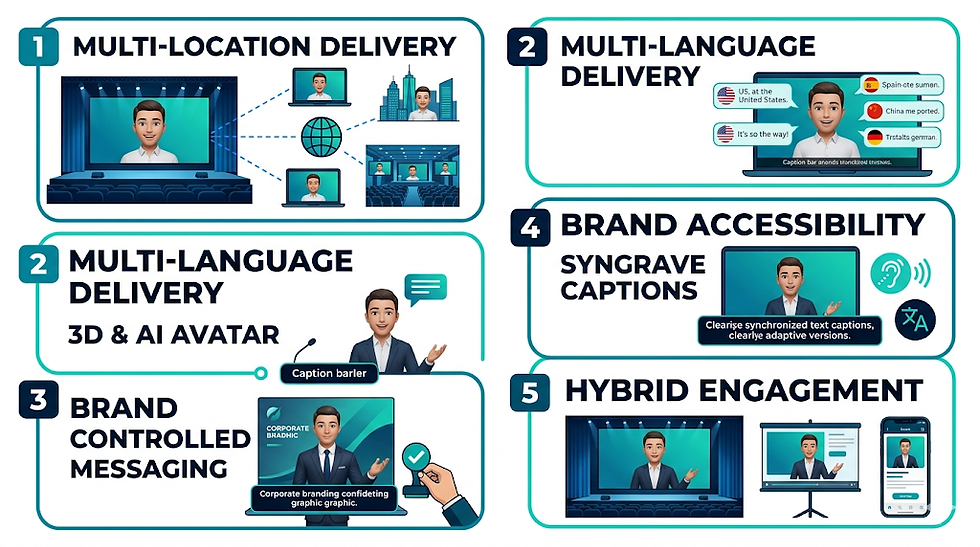

Multi location delivery: one keynote, multiple venues, consistent tone and timing

Multi language delivery: localized voice and subtitles without losing the speaker’s cadence

Brand controlled messaging: fewer variables than a guest speaker touring ten cities

Accessibility: clearer pacing, on screen captions, and adaptive versions for different audiences

Hybrid engagement: the same character can appear on the main stage, then in breakout rooms, then in the event app

The important nuance is this: the show-stealing version is not the most futuristic one. It is the one that respects conference rhythm. Strong opening. Clear narrative arc. Designed pauses. Visual emphasis that works on a massive screen. You are still directing a performance.

If your keynote is tied to a commercial announcement or a partner narrative, the most common fit is an avatar designed for enterprise-style communication, which is the focus of the AI avatar for business use case.

The Production Pipeline Behind a Stage-Ready Digital Human

If you want a digital human to hold attention for twenty minutes on a conference screen, you have to build it like you would build a lead character for film, with event engineering layered on top.

1. Identity and likeness decisions

First decide whether the keynote avatar represents:

A real person: CEO, founder, spokesperson, or expert

A fictional brand character: designed mascot with human credibility

A hybrid presenter: stylized but still grounded in realistic performance

Real person likeness demands strict consent, usage scope, and revocation policies. The audience should never feel tricked. That trust dynamic matters more on stage than in a marketing video.
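As a minimal sketch of how consent, usage scope, and revocation could be tracked as data, the hypothetical record below checks whether a given use of a likeness is permitted on a given date. The class name `LikenessConsent`, its fields, and the example uses are assumptions made for illustration. They are not a legal template or any platform's actual schema.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class LikenessConsent:
    """Hypothetical consent record for a real-person avatar (illustrative only)."""
    person: str
    covers_face: bool
    covers_voice: bool
    allowed_uses: set[str]            # usage scope, e.g. {"keynote", "booth"}
    valid_until: date                 # last day the consent applies
    revoked_on: Optional[date] = None

    def revoke(self, on: date) -> None:
        """Record a revocation; uses on or after this date are refused."""
        self.revoked_on = on

    def permits(self, use: str, on: date, needs_voice: bool = True) -> bool:
        """Return True only if every condition of the consent is met."""
        if self.revoked_on is not None and on >= self.revoked_on:
            return False
        if on > self.valid_until:
            return False
        if not self.covers_face:
            return False
        if needs_voice and not self.covers_voice:
            return False
        return use in self.allowed_uses
```

A production team could run a check like `consent.permits("keynote", show_date)` before each deployment, so that a revocation or an out-of-scope use blocks the show file rather than surfacing afterwards.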

2. Asset creation: model, textures, and rig

A stage asset must survive close ups and wide shots.

Typical build steps include:

High-resolution head model with scan-based detail or sculpted realism

Physically plausible skin shading and eye rendering tuned for LED walls

Facial rig for speech shapes, emotion beats, and micro expressions

Body rig that supports natural posture shifts, gestures, and turns

If your conference audience includes creators, engineers, or VFX professionals, they will subconsciously evaluate the rig. Bad facial weights and dead eyes break belief instantly.

3. Performance: mocap, animation, or live control

Keynote delivery is rhythm. That rhythm comes from performance capture decisions.

Common approaches:

Pre-captured performance: full body and face capture for a locked talk

Hybrid: captured base performance with hand-keyed refinements for emphasis

Live puppeteering: operator-driven body language synced to speech for dynamic segments

For most conferences, the best result is a pre-captured performance with selective refinements. It reduces risk and keeps the pacing cinematic.

4. Voice: studio quality, not “AI voice”

Audience tolerance for synthetic speech is improving, but conferences are unforgiving. A keynote voice must feel intentional.

Best practice looks like:

Scripted voice recorded by a real actor, or a consented voice model built from clean recording sessions

Direction for emphasis and breath so it does not feel like an audiobook

Audio mastering for venue acoustics and large speaker systems

5. Intelligence layer: scripted first, conversational second

The keynote itself is usually scripted. Conversation is optional and must be controlled.

If you want the avatar to answer questions, the safest approach is a moderated Q and A where:

Questions are filtered

The avatar is grounded to approved knowledge sources

The system has refusal behavior for unsafe or off topic prompts

A human producer can intervene
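The four controls above can be sketched as a single function. This is a simplified illustration under stated assumptions, not how any particular avatar platform works: `APPROVED_ANSWERS`, `BLOCKED_TERMS`, `REFUSAL`, and the keyword matching are all hypothetical placeholders, and a real system would use proper retrieval and moderation instead of word lookups.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical approved knowledge source: topic keyword -> approved answer.
APPROVED_ANSWERS = {
    "localization": "The keynote is delivered with localized voice and subtitles.",
    "consent": "A real person's likeness is used only with explicit consent.",
}
# Placeholder filter list for questions that should never reach the avatar.
BLOCKED_TERMS = {"salary", "lawsuit"}
REFUSAL = "I can't speak to that here, but the team can follow up after the session."


@dataclass
class Reply:
    text: str
    source: str  # "approved", "refusal", or "producer"


def answer(question: str, producer_override: Optional[str] = None) -> Reply:
    """Route one audience question through the moderation steps."""
    # A human producer can intervene and replace the avatar's reply.
    if producer_override is not None:
        return Reply(producer_override, "producer")
    words = set(question.lower().replace("?", " ").split())
    # Questions are filtered before any answer is attempted.
    if words & BLOCKED_TERMS:
        return Reply(REFUSAL, "refusal")
    # The avatar only answers from approved knowledge sources.
    for topic, text in APPROVED_ANSWERS.items():
        if topic in words:
            return Reply(text, "approved")
    # Anything off topic falls through to refusal behavior.
    return Reply(REFUSAL, "refusal")
```

The design choice worth noting is that refusal is the default path: the avatar speaks only when a question passes the filter and matches approved material, or when the producer supplies the line.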

If you are building the system to interact beyond the main stage, explore a structured agent approach through AI agents, where the avatar becomes one interface for a governed knowledge and action layer.

Real Time Delivery Formats for Events and Keynotes

There is no single “right” way to deploy a digital human keynote. The format depends on your venue, your risk tolerance, and whether you want interaction.

Common event formats include:

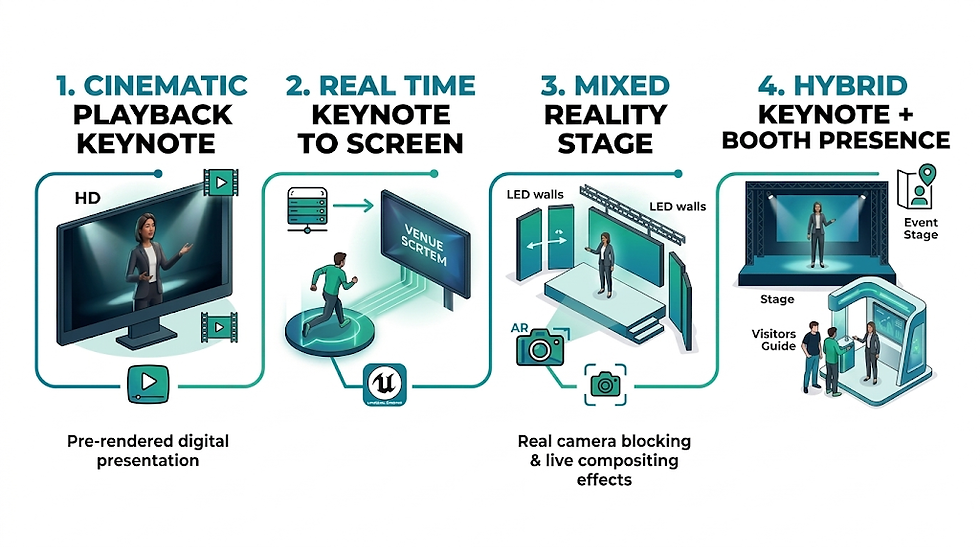

Cinematic playback keynote: pre-rendered or real-time rendered video played like a film segment

Real-time keynote to screen: avatar runs in a real-time engine and outputs to the venue screen system

Mixed reality stage: avatar appears on LED walls with real camera blocking and live compositing

Hybrid keynote plus booth presence: the same character appears on stage, then later becomes an interactive event guide

If you are also running gaming-adjacent events, esports programming, or creator-focused conferences, a stylized but credible approach can be more culturally native. That is where the character language of an AI avatar for gaming often performs better than pure corporate realism.

Comparison Table

| Approach | Best For | Strengths | Risks | Typical Production Needs |
| --- | --- | --- | --- | --- |
| Human keynote speaker | Prestige and authenticity | Natural presence, live improvisation | Travel, availability, inconsistency | Speaker coaching, AV rehearsal |
| Pre produced avatar keynote | Big reveal moments | High polish, repeatable, cinematic | No live Q and A | Character build, animation, mastered audio |
| Real time avatar keynote | Hybrid and multi venue | Live timing control, adaptable | Technical failure risk | Real time engine, operator, venue integration |
| Avatar plus moderated Q and A | Thought leadership at scale | Audience interaction with control | Requires governance and careful design | Agent layer, safety rules, rehearsal |
| Panel with avatar co host | Entertainment and pacing | Keeps panels tight, bridges segments | Can feel gimmicky if poorly written | Scriptwriting, timing, character direction |

Applications Across Industries

AI avatar keynote speakers are not limited to tech conferences. The strongest applications are where clarity, repeatability, and scale matter.

Enterprise events and product launches: consistent global messaging and localization

Education and training conferences: structured teaching segments and repeatable workshops

Healthcare and wellness summits: patient-friendly explanations when tone and empathy are designed intentionally

Fashion and retail events: virtual hosts that can present collections and interact with attendees

Sports and fan experiences: digital hosts for halftime segments, fan Q and A, sponsor activations

Mobility and robotics conferences: futuristic presentation formats that still communicate engineering detail

If your event is in a regulated or high-trust domain, choose a use case framework built for that audience. For example, a clinical or patient-facing keynote needs a different tone and compliance posture, aligned with AI avatar for healthcare. A mindfulness or therapy-adjacent summit should treat the avatar as a calm interface, more like AI avatar for wellness.

Benefits

A digital human can steal the show when the goal is not to replace people, but to deliver a moment people remember, with fewer constraints.

Key benefits conference teams typically care about:

Reliable delivery across multiple venues and time zones

Faster localization with consistent performance quality

Brand safe messaging with controlled updates

Clear accessibility options: captions, pace variations, and language tracks

Reusable asset value: the same avatar can become an event guide, onboarding host, or training presenter

Creative staging: impossible camera moves, transitions, and visual metaphors that reinforce the story

Most importantly, an avatar keynote can turn your conference from a series of talks into a directed experience, closer to virtual production than a standard presentation.

Future Outlook

The next wave will not be about making avatars “more human” in a generic sense. It will be about making them more stage-literate.

Expect improvements in:

Real time facial nuance: better eye behavior, better mouth interiors, more natural micro movement

Safer conversational layers: better grounding, better refusal behavior, better moderation tools

Personalization at scale: one keynote delivered in many versions without losing authorial intent

Integration with event platforms: avatars that appear consistently across screens, apps, kiosks, and broadcast feeds

Hybrid pipelines: teams will mix motion capture, real-time engines, and AI-assisted editing the same way modern virtual production mixes practical and digital elements

As this matures, the most valuable skill will be direction. Writing. Performance. Timing. Digital humans will become another kind of stagecraft, and the conferences that win will treat them like performers, not like software.

For organizations exploring whether they need a platform-level deployment or a one-time build, the broader ecosystem view on Enterprise solutions can help frame what governance, scalability, and rollout typically look like.

FAQs

1. Can an AI avatar keynote speaker replace a human keynote?

It can replace certain formats, especially scripted keynotes built around announcements, education, or consistent brand messaging. For high-stakes thought leadership, many events use avatars alongside humans rather than instead of them.

2. What makes an avatar keynote feel believable?

Believability comes from performance direction, facial rig quality, eye behavior, and sound design. A technically perfect model with weak acting still feels empty on stage.

3. Is it possible to do live Q and A with a digital human?

Yes, but the best practice is moderated Q and A with a governed knowledge base, clear refusal behavior, and human producer oversight.

4. How long does it take to build a stage quality digital human?

Timelines vary based on realism level, whether a likeness is involved, and whether you need interactivity. The fastest path is usually a scripted keynote with a controlled performance pipeline.

5. What are the biggest risks for conferences?

Technical integration issues, uncanny facial motion, and unclear audience expectations. The safest deployments are rehearsed like a show and designed with transparency.

6. Do attendees react positively to avatar keynote speakers?

They do when the avatar is framed as a deliberate creative choice and the content is strong. If it is used as a novelty, audiences move on quickly.

7. How do you handle ethics and consent for a real person likeness?

You need explicit consent, defined usage scope, voice and face rights, and a mechanism to revoke or limit usage. Trust matters more than spectacle.

8. What is the best first use of a digital human at an event?

A short opening segment, a transition host between sessions, or a keynote that is scripted and rehearsed. Once the audience trusts the character, you can expand into interactive formats.

Conclusion

A digital human can absolutely steal the show at your conference, but only if you treat it like stagecraft. The keynote must be written with intention, performed with believable rhythm, and delivered through a pipeline that respects both cinema quality and live event reliability.

When it works, it does something traditional keynotes struggle to do. It becomes a repeatable, global, controllable performance asset that can live beyond one day on a stage. It can open your event with precision, carry complex narratives with clarity, and reappear later as an event guide, educator, or interactive host.

The organizers who win with AI avatar keynote speakers will not be the ones chasing novelty. They will be the ones directing a real performance, built with the same care you would bring to a hero character in VFX, only tuned for the unforgiving honesty of a conference screen.

For further information or queries, please contact the Mimic Minds press department: info@mimicminds.com